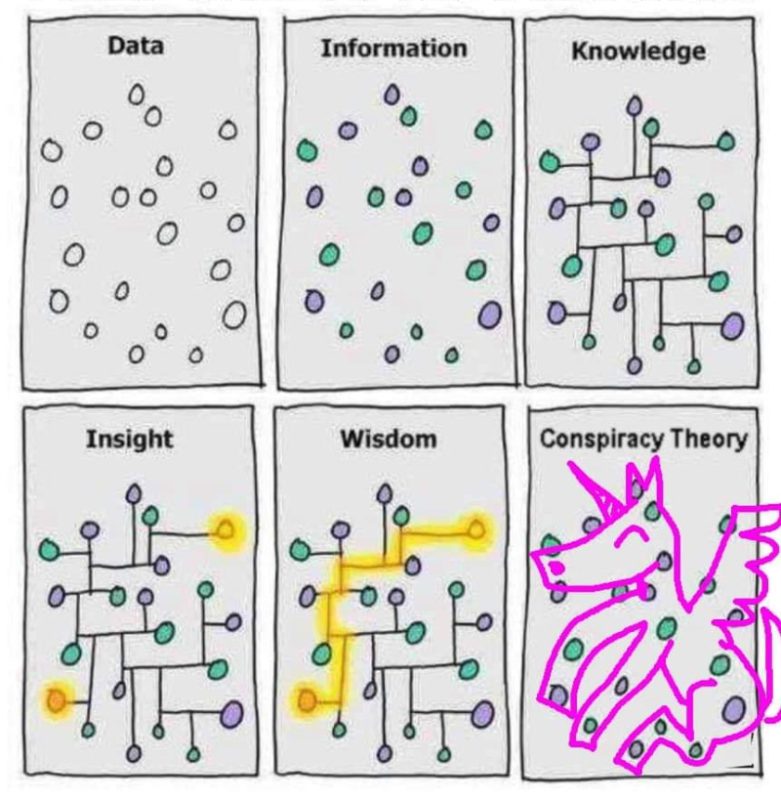

Conspiracy theory

/kənˈspɪrəsi ˈθɪəri/

Noun: A spoiler.

Q: What’s the difference between a conspiracy theory and a fact?

A: About six months.

Ah, I joke, I joke… But do I really?

There are 100 million psychopaths in the world. Another 500 million or so people, who don’t quite fit that definition, operate with a similarly catastrophic lack of empathy. It is this unsavoury fact that creates much of the tension in the game of life and our cultural obsession with good versus evil.

Tim Foyle writes brilliantly on this topic, noting the simple and obvious wisdom of keeping a cautious eye on how these less than compassionate humans are spending their time. Psychopaths, he explains, find it quite peculiar that the rest of us limit ourselves to honest and kind dealings, when lying in public and colluding in private are so much more effective.

That psychopaths and sociopaths think and behave this way is not a ‘theory’, but well established in psychiatric literature and cultural history. It’s just really not nice to think about.

Collaboration theorists

I have heard many people joke lately that they have taken to consulting their ‘conspiracy theorist’ friends regularly, to find out what’s coming next. Those friends have often proven more accurate than the carefully curated, stakeholder-driven messaging that comprises too much of our news media these days.

Perhaps it’s always been this way, but as time goes by we perceive the game more clearly or cynically.

Still, your conspiratorial friends can be very handy. They are at least attempting to stay across what key members of the low empathy club are doing with their days and how they might be collaborating. Sociopaths like to keep busy and have friends too, you know?

However, many of us also have a friend or acquaintance who really is hanging off the edge. Schizoid traits, as well as related illnesses or the over-use of marijuana, can sometimes cause deeply distrustful, paranoid thinking. Wild linkages can be made between genuinely unrelated things.

But at the opposite extreme is the person who is so trusting that they believe everything they read or hear, or imagine that others only ever have their best interests at heart. This naivety is much less eye-catching, but very common and equally dysfunctional.

Reality bites

Between these extremes lies a wide and colourful expanse of relative truths — the mix of savoury and unsavoury nibbles that comprise the tapas of life.

So the great challenge for each of us is learning to discern when our perceptions are accurate and when they are not. How can you be sure?

How can we tell whether a conspiracy theory is the result of seeing false patterns or trusting the wrong information, or an accurate assessment of distasteful but genuine collusion?

Of course, most of us believe ourselves to be rational. It can be tempting (though foolhardy) to laugh derisively at those who assess a situation differently than we do. Poor George, he’s lost it.

But clear thinking, it turns out, is both capacity and art.

The art of accurate perception

For the vast majority of us, getting our thoughts straight requires ongoing, intentional practice. That’s if we care about clear-mindedness at all, and many don’t.

But hold on a minute. Why has Mother Nature even created beings who can be so easily misled? Surely clear thinking is such a survival advantage that evolution would have carefully selected for it?

Well, it kind of did, but in many ways it absolutely did not.

The problem is that Mother Nature also tried to be efficient by endowing us with mental shortcuts. We have complex lives, so we need to be able to process a lot of information, fast. But you know what they say about taking shortcuts…

Cognitive bias

To become a clear thinker, an essential starting point is to be well-versed in evolution’s cognitive shortcuts, otherwise known as biases.

Biases are faulty perceptions that arise from the best of primeval intentions — survival. And there are hundreds of them. Here are a handful:

- People who have the same eye and hair colour as you are safer to be around than those with a different appearance > In-tribe bias or stranger-danger. In our biological history, tribe members were likely to be safe but strangers might not be, so nature endowed us with an instinctive caution around people who look different. In a multicultural world, this shortcut turns upon itself. It is not only wrong but destructive.

- Large men are better job candidates than small men > Fitness bias. Big strong hunters are more valuable. However, they are not more valuable for modern things like doing accounts, or sales, or teaching, or parenting.

- What my tribe members say is likely to be true > Trusted source bias. Your tribe member probably really did just see the river rising fast, so it’s a huge survival advantage to instantly believe her and run for high ground, rather than wasting time ‘fact-checking’.

Modern media mouthpieces feel like they are our tribe members relaying truth, but in fact their messages have been shuffled through the minds of a whole chain of stakeholders and had several edits, so they will inevitably be distorted a little or a lot.

One simple way to observe this phenomenon is to travel internationally. Watch news stations at your destination reporting on what’s been happening in the place you just left. Hang on, that’s not what happened. I was just there.

This is why citizen journalism is becoming increasingly popular. The impact can be quite dramatic, like the British man who didn’t trust his local broadcaster’s news reports about the war in Kyiv, so he flew there, walked around town, and reported back live. His amateur journalism may not have been a perfect portrayal, but it did make it hard to argue with his criticisms of his local broadcaster.

Gell-Mann Amnesia

This is my personal favourite bias! I have a poster of it on my wall, though I keep forgetting it’s there. It really takes discipline to get around this one, because it goes like this:

- You read an article on a subject that you happen to know a lot about. It’s your expertise or a passion you’ve studied in depth. The journalist is paid per article (speed) and rewarded by clicks (attention hooks), so the piece is rubbish. It’s obvious to you that it’s riddled with errors and misunderstandings.

- You recoil in horror, knowing that many people will probably believe this crud. You swipe indignantly to the next article in search of something better.

- The next article is on an interesting topic that you know very little about. You gleefully read and absorb the information with the attitude of “Oh really, is that so? Fascinating!”

We tend to believe unfamiliar information from the same source that spouted verifiable rubbish on a familiar topic moments earlier. We reflexively default to trust. Doh!

More biases

Lack of information or innocence — a very simple bias. Youth, for example, guarantees this. Learning takes time, so about half of our population in any given year lacks the life experience to see things clearly.

Past-present bias. Many people acknowledge that true evil was perpetrated historically, yet irrationally imagine that those traits don’t apply currently, as if humanity cleansed itself in a single generation. Negative things get pushed further back along our mental timeline, and positive things literally feel closer.

Related is the common belief that humans in past generations were quite stupid, and our intelligence is really only blossoming now. (That perspective, ironically, is evidence against itself.)

People laugh at the follies of the past, arrogantly oblivious to the inevitability of our current beliefs being rightly viewed as follies in the future.

“Those 2020s humans had no clue! Get this, they actually used to think [Add belief here]!”

Against the grain

Everyone else is doing it — Group-think, Lemming bias, or Monkey-see > Monkey-do.

Humans have an incredibly strong, nearly irresistible subconscious compulsion to align their thoughts, words and behaviour with the perceived majority — to copy their tribe.

That’s why advertisers work so hard to normalise your perception of the thing they want you to buy. They make it seem like everyone else is already buying/thinking/doing it.

It takes real strength of mind and social resilience to trust your own judgement and walk a different path.

Here’s a shocking example of how the Monkey-see > Monkey-do bias can work against you:

Many years ago, a train got stuck in a steep mountain tunnel and burst into flames. Almost all of the passengers ran together uphill, toward the light, to escape. They were all killed by fire and smoke, because heat and smoke rise. Just a very few placed their bet on running downhill, towards the fire, to get below it. They were the only ones who survived.

Your odds are reasonable when copying your tribe, but knowing when to think for yourself can save you too.

When good people do bad things

Then there’s classic denial, meaning wishful thinking or the La-la-la bias. OK, I made that up.

The horror and sickening sorrow of encountering evil in human nature can be so overwhelming that many people just choose to avert their gaze. This blinkering instinct can lead good people to cause great harm when they fail to question, or feel mysteriously compelled to follow, the bidding of a sociopath or incompetent at the top of a decision chain.

Even when a problem appears undeniable, the possibility of having harmed others is so unacceptable to kind souls that some do not allow themselves to acknowledge it, or even sometimes to perceive it.

So they continue causing harm. To stop would be to admit the harm already perpetrated, triggering a crisis of conscience or fear of punishment. It feels easier to just not go there.

Of course, this is a perilous path to drag oneself along, because it inevitably gets worse. Harmful actions accumulate and come home to bite much harder eventually. En route, the reality-denier often loses their best defence, too: that they were unaware.

Importantly, there is no conspiracy at this level, just incompetence and weakness of character.

When smart people think stupid things

Even very academically clever people can get fixated on an incorrect idea and be quite unaware of the confirmation bias reinforcing it. Confirmation bias is when we prematurely adopt a point of view (usually an auto-belief) then subconsciously filter out contradictory evidence and ‘see’ only supporting evidence. It’s powerful and universal, requiring tremendous discipline to guard against.

Entire fields of science (and egos) can get stuck for decades on a central cult-like belief, quite unaware that it is just a belief or theory, not a fact.

Fixed facts feel so much more comforting to the psyche, no? But they are brittle. Far better to build your mental structures out of malleable materials that can flex to accommodate new information.

Expert bias — believing qualified people. Of course qualifications and experience really do help a lot, so this bias is thoroughly sensible. However, our education system makes considerable use of the trusted source shortcut — the simple relaying of facts (or beliefs dressed up as facts) — because that’s much quicker than getting a roomful of adolescents to think.

Consequently, some high-achievers are really just skilful auto-believers. They can absorb and regurgitate complex information, yet remain quite unaware and unskilled as thinkers.

Listen > Believe > Pass it on

We really need to teach young people the art of thinking, not just what to think.

Anyway, this means that even highly credentialed people can display poor judgement or rigid thinking and provide incorrect information. So we should not automatically trust what they say, either.

Also, everyone just gets things wrong sometimes. In fact…

We are usually wrong, so get used to it

One of the most disciplined rational minds of our time, Stanford University’s Professor John Ioannidis, formally argued that most conclusions published in peer-reviewed research ultimately end up being wrong.

Hey, maybe even that’s wrong.

If the best available evidence is usually wrong, it’s because we don’t know what we don’t know and wisdom is a continually moving target.

This means that to be a good thinker you must build into your worldview an acceptance that what fallible human beings say — whether great experts, media mouthpieces, your savviest friends, or the mutterings of your own mind — may be functionally incorrect most of the time.

This is not a matter for judgement, criticism or ego. It’s just something to get comfortable with.

If you want to perceive reality more accurately — to become more skilled at homing in on the truth — then a calm* and open mind, with a commitment to reserving judgement, is the place to start.

—————

*Further reading: Your stress response also has a huge impact on your capacity to think straight. That’s such a large topic unto itself that it requires several articles to tackle. Look here to get started.